Internet Standards and Age Verification Architecture

This is a working Internet-Draft submitted to the Internet Engineering Task Force (IETF), the body responsible for developing the open standards that the internet runs on. Before a draft becomes a standard, it is published for review and comment by the wider technical and policy community. This draft is at that stage now.

It is authored by Mallory Knodel of the Social Web Foundation, Gianpaolo Angelo Scalone of Vodafone Group, Tom Newton of Qoria, and Audrey Hingle of Exchange Point, bringing together expertise across civil society, telecommunications, child safety technology, and internet infrastructure.

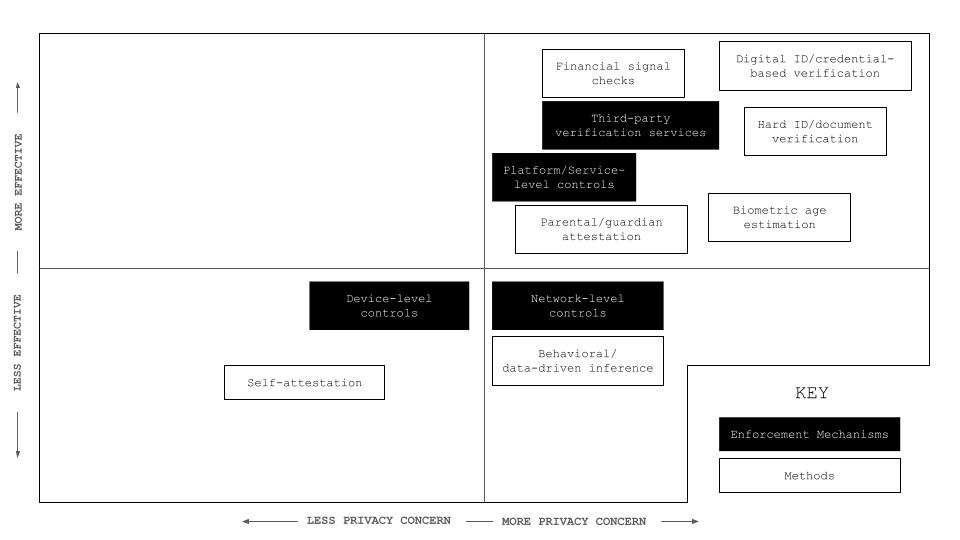

If you are working on age assurance policy, regulation, or implementation, this draft describes the technical landscape in terms that policy discussions can engage with directly. It sets out a solution-agnostic and technology-neutral framework for how various intermediaries can gate content and services based on age, analyses the effectiveness of each approach, and covers privacy, security and human rights considerations that any system will need to address.

Many age verification methods conflict with data protection principles and pose serious safety, security, and privacy risks. Requiring all users on all platforms to submit verifiable credentials can create large, sensitive data stores in centralised intermediaries that are vulnerable to breaches, fraud, or misuse. Once compromised, this information is difficult, if not impossible, to secure again.

Reducing harm to children on the internet requires an incremental, all-hands approach; age verification alone cannot achieve it. A more resilient approach relies on a plurality of mechanisms operating at different layers of the internet architecture, each limited in scope and aligned with privacy-by-design principles.

Not all platforms are the same

Age-assurance mechanisms cannot be applied uniformly across the internet because different platforms have different relationships with their users, with personal data, and with the law.

Core internet infrastructure should remain neutral. Asking networks to make decisions about individual users introduces censorship risks. Government services, by contrast, are already identity-bound by law and can treat age as part of an existing verified identity framework. Essential services such as banking, healthcare, and education must stay broadly accessible, so the bar for age assurance here should be low.

General-use platforms are the most contested area. Social media, messaging, gaming, and app stores mix adult and minor audiences at scale across many jurisdictions. Each has its own enforcement logic: a messaging platform cannot inspect private content, so it relies on account or device-level signals; a gaming platform can use guardian consent and payment signals; an app store can enforce consistent age labels that flow through to the apps it distributes.
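
To make the per-platform enforcement logic above concrete, here is a minimal sketch in Python. It is an illustration only, not something taken from the draft: the `Platform` enum, the `AGE_SIGNALS` table, and the signal names are all hypothetical labels chosen to mirror the examples in the paragraph above.

```python
from enum import Enum


class Platform(Enum):
    """Hypothetical platform categories, mirroring the examples in the text."""
    MESSAGING = "messaging"
    GAMING = "gaming"
    APP_STORE = "app_store"


# Signals each platform type can draw on. The names are illustrative
# labels (assumptions), not terms defined by the draft.
AGE_SIGNALS = {
    # Cannot inspect private content, so relies on account or device signals.
    Platform.MESSAGING: ["account_level_signal", "device_level_signal"],
    # Can use guardian consent and payment signals.
    Platform.GAMING: ["guardian_consent", "payment_signal"],
    # Enforces age labels that flow through to the apps it distributes.
    Platform.APP_STORE: ["age_label"],
}


def signals_for(platform: Platform) -> list[str]:
    """Return a copy of the age signals available to a platform type."""
    return list(AGE_SIGNALS[platform])
```

The point of the table is that no single signal appears under every platform type, which is why one uniform mechanism does not fit all three.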

What counts as adult content also varies by jurisdiction. Material classified as restricted in one country may be considered normal commercial or artistic content in another. Any system has to account for this rather than impose a single global classification.
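
One way to picture jurisdiction-dependent classification is a lookup keyed by jurisdiction rather than a single global table. The sketch below is purely illustrative: the jurisdiction names, the category name, the age threshold, and the `is_restricted` helper are all made-up placeholders, not values or mechanisms from the draft or from any real legal regime.

```python
# Hypothetical rules: jurisdiction -> {content category -> minimum age}.
# A category absent from a jurisdiction's table is treated as unrestricted there.
RULES = {
    "jurisdiction_a": {"category_x": 18},
    "jurisdiction_b": {},  # same category is unrestricted here
}


def is_restricted(jurisdiction: str, category: str, age: int) -> bool:
    """Return True if a user of this age may not access the category
    under the given jurisdiction's rules.

    Raises KeyError for an unknown jurisdiction instead of silently
    falling back to some global default.
    """
    rules = RULES[jurisdiction]
    min_age = rules.get(category)
    return min_age is not None and age < min_age
```

Under these placeholder rules, the same 16-year-old and the same content category yield different answers in `jurisdiction_a` and `jurisdiction_b`, which is the situation a single global classification cannot represent.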
